Cluster

Deprecated: see the T2B wiki (http://t2bwiki.iihe.ac.be).

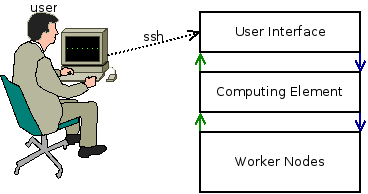

Latest revision as of 15:03, 16 September 2016

IIHE local cluster

Overview

The cluster is composed of four machine types:

- User Interfaces (UI)

  The cluster front end. To use the cluster, you log in to one of these machines.

  Servers: ui01, ui02

- Computing Element (CE)

  The core of the batch system: it runs submitted jobs on the worker nodes.

  Servers: ce

- Worker Nodes (WN)

  The computing power of the cluster: they run the jobs and report their status back to the CE.

  Servers: slave*

- Storage Elements

  The storage of the cluster: they hold data, software, etc.

  Servers: datang (/data, /software), lxserv (/user), x4500 (/ice3)

How to connect

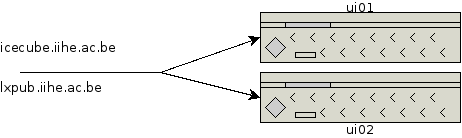

To connect to the cluster, you must use your IIHE credentials (the same as for the Wi-Fi).

```
ssh username@icecube.iihe.ac.be
```

Tip: icecube.iihe.ac.be and lxpub.iihe.ac.be automatically point to an available UI (ui01, ui02, ...).

After a successful login, you will see this message:

```
==========================================
Welcome on the IIHE ULB-VUB cluster
Cluster status http://ganglia.iihe.ac.be
Documentation http://wiki.iihe.ac.be/index.php/Cluster
IT Help support-iihe@ulb.ac.be
==========================================
username@uiXX:~$
```

Your default current working directory is your home folder.

Directory Structure

Here is a description of the most useful directories:

/user/{username}

Your home folder

/data

Main data repository

/data/user/{username}

Your data folder

/data/ICxx

IceCube datasets

/software

The local software area

/ice3

This folder is the old software area. We strongly recommend building your tools in the /software directory instead.

/cvmfs

Centralised CVMFS software repository for IceCube and CERN (see the CVMFS section of the IceCube_Software_Cluster page)

Batch System

Queues

The cluster is divided into the following queues:

|  | any | lowmem | standard | highmem | express | gpu |
|---|---|---|---|---|---|---|
| Description | Default queue, all available nodes (except the GPU and express nodes) | 2 GB RAM | 3 GB RAM | 4 GB RAM | Limited walltime | Dedicated GPU queue |
| CPUs (jobs) | 494 | 88 | 384 | 8 | 24 | 14 |
| Walltime default/limit | 144 hours (6 days) / 240 hours (10 days) | 144 hours / 240 hours | 144 hours / 240 hours | 144 hours / 240 hours | 15 hours / 15 hours | 50 hours / 50 hours |
| Memory default/limit | 2 GB | 2 GB | 3 GB | 4 GB | 3 GB | 6 GB |
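The table can be read as a simple decision rule. The sketch below is a hypothetical helper named `pick_queue` (it is not an installed command). It returns the smallest of the lowmem, standard and highmem queues whose listed memory covers a request, using only the numbers from the table: 2, 3 and 4 GB, and a 240-hour walltime limit. It ignores the express and gpu queues, and you should check the values against the table before relying on it.

```shell
# Hypothetical helper, not an installed command: print a queue name for a job
# that needs MEM_GB gigabytes of RAM and HOURS hours of walltime (integers).
# Limits are copied from the Queues table: lowmem 2 GB, standard 3 GB,
# highmem 4 GB, walltime limit 240 hours. express and gpu are not considered.
pick_queue() {
    local mem_gb="$1"
    local hours="${2:-144}"   # 144 hours is the default walltime of these queues

    if [ "$hours" -gt 240 ]; then
        echo "error: ${hours} h exceeds the 240 h walltime limit" >&2
        return 1
    fi

    if [ "$mem_gb" -le 2 ]; then
        echo lowmem
    elif [ "$mem_gb" -le 3 ]; then
        echo standard
    elif [ "$mem_gb" -le 4 ]; then
        echo highmem
    else
        echo "error: no queue in the table offers ${mem_gb} GB" >&2
        return 1
    fi
}
```

For example, `queue=$(pick_queue 3 48) && qsub -q "$queue" myjob.sh` submits to the standard queue. If the request fits no queue, the `&&` prevents submission.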

Job submission

To submit a job, use the qsub command:

```
qsub myjob.sh
```

OPTIONS

- -q queueName: choose the queue (default: any)

- -N jobName: set the name of the job

- -I: (capital i) start an interactive job

- -M mailaddress: set the mail address (use in conjunction with -m); it MUST be @ulb.ac.be or @vub.ac.be

- -m abe: send mail when the job is aborted (a), begins (b) and/or ends (e); use any combination of these letters

- -l resource=value: request resources, e.g. -l walltime=12:00:00 or -l mem=3gb
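The same options can be stored in the job script as `#PBS` directive lines, which `qsub` reads before the first command. The script below is a minimal sketch that assumes a Torque/PBS batch system. The queue, resource values, job name and mail address are placeholders to adapt, and the workload is a stand-in. The shell treats `#PBS` lines as ordinary comments, so you can also run the script by hand for a quick check.

```shell
#!/bin/bash
# Minimal sketch of a job script (myjob.sh), assuming Torque/PBS.
# qsub reads the #PBS lines below as if they were command-line options.

# Queue to use (see the Queues table)
#PBS -q standard
# Job name shown by qstat
#PBS -N myjob
# Requested walltime (hh:mm:ss) and memory; stay within the queue limits
#PBS -l walltime=12:00:00
#PBS -l mem=3gb
# Send mail when the job is aborted (a) or ends (e)
#PBS -m ae
# Hypothetical address: it MUST be @ulb.ac.be or @vub.ac.be
#PBS -M firstname.lastname@ulb.ac.be

# Batch jobs start in the home directory by default; PBS_O_WORKDIR holds the
# directory qsub was called from. Fall back to the current directory when the
# script is run by hand outside the batch system.
cd "${PBS_O_WORKDIR:-$PWD}" || exit 1

# Stand-in workload: write one line to an output file named after the job ID.
outfile="result_${PBS_JOBID:-local}.txt"
echo "Job ${PBS_JOBNAME:-myjob} ran on $(uname -n) at $(date)" > "$outfile"
```

Submit it with `qsub myjob.sh`. Options given on the `qsub` command line take precedence over the directives, so `qsub -q express -l walltime=02:00:00 myjob.sh` reuses the script for a short test. `qsub -I -q express` opens an interactive shell on a worker node instead of running a script.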

Job management

To see all jobs (running and queued), use the qstat command or go to the JobMonArch page (http://ganglia.iihe.ac.be/addons/job_monarch/?c=nodes):

```
qstat
```

OPTIONS

- -u username: list only the jobs submitted by username

- -n: also show the nodes on which the jobs are running

- -q: show how jobs are distributed across the queues
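`qstat` prints one line per job, which makes its output easy to post-process. The sketch below counts jobs per state, for example `Q` for queued and `R` for running. It assumes the default Torque layout of plain `qstat`: two header lines followed by the columns Job ID, Name, User, Time Use, S and Queue. The function name `job_states` is hypothetical. If the local output differs, adjust the column numbers.

```shell
# Hypothetical helper: read default-format `qstat` output on stdin and print
# how many jobs are in each state, one "STATE COUNT" line per state.
# With an argument, only that user's jobs are counted.
# Assumed columns: Job ID, Name, User, Time Use, S, Queue.
job_states() {
    local user="${1:-}"
    awk -v user="$user" '
        # Job lines follow the dashed separator under the header.
        /^-+/ { body = 1; next }
        body && NF >= 6 && (user == "" || $3 == user) { count[$5]++ }
        END { for (s in count) print s, count[s] }
    ' | sort
}
```

For example, `qstat | job_states "$USER"` prints one line per state, each giving the number of your jobs in that state.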

Backup

/user, /data and /ice3 are backed up with snapshots.

The snapshots are located in:

- /backup_user
  - daily: 5
  - weekly: 4
  - monthly: 3

- /backup_data
  - daily: 6

- /backup_ice3
  - daily: 5
  - weekly: 4
  - monthly: 3

Each directory contains a snapshot taken at a specific time. To restore a file, copy it from the relevant snapshot directory to the location you want.
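Because restoring is a plain copy, the main task is finding the right snapshot. This page does not list the snapshot directory names, so the hypothetical helper `find_in_snapshots` below makes no assumption about them. It checks every subdirectory of a backup area for a given relative path and prints each copy with its modification time. It does assume that each snapshot mirrors the directory tree of the area it backs up, and it relies on GNU `stat -c`.

```shell
# Hypothetical helper: list the snapshots under BACKUP_ROOT (e.g. /backup_user)
# that contain REL_PATH, printing "path<TAB>modification time" for each copy.
# Assumes every snapshot directory mirrors the layout of the backed-up area.
find_in_snapshots() {
    local backup_root="$1"
    local rel_path="$2"
    local snap
    for snap in "$backup_root"/*/; do
        if [ -e "${snap}${rel_path}" ]; then
            # %y = time of last modification (GNU stat)
            printf '%s\t%s\n' "${snap}${rel_path}" "$(stat -c %y "${snap}${rel_path}")"
        fi
    done
}
```

For example, `find_in_snapshots /backup_user username/analysis/run.py` lists the saved copies of that file. The relative path is illustrative, since the layout inside a snapshot depends on how the snapshots are organised. You can then run `cp -a` on one of the listed paths with a new name such as `~/analysis/run.py.restored`. This preserves the timestamps and leaves the current file untouched until you have compared the two.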

Useful links

Ganglia Monitoring (http://ganglia.iihe.ac.be): server status

JobMonArch (http://ganglia.iihe.ac.be/addons/job_monarch/?c=nodes): job overview